Leveraging EuroHPC Resources for Scientific Breakthroughs: Key Outcomes and Collaborative Success from the CINECA Open Hackathon 2025

This summer, researchers from Italy, Spain, France, and Austria convened at the CINECA Open Hackathon to optimize and rigorously test their code and applications on LEONARDO, a Tier-0 EuroHPC supercomputer. As CINECA prepares to manage the future AI factory's strategic supercomputing infrastructure alongside its existing HPC capabilities, the hackathon served as a critical platform. Teams working on applications ranging from drug discovery to machine translation and plasma physics sought to accelerate their models on LEONARDO, which ranks among the ten most powerful supercomputers on the Top500 list.

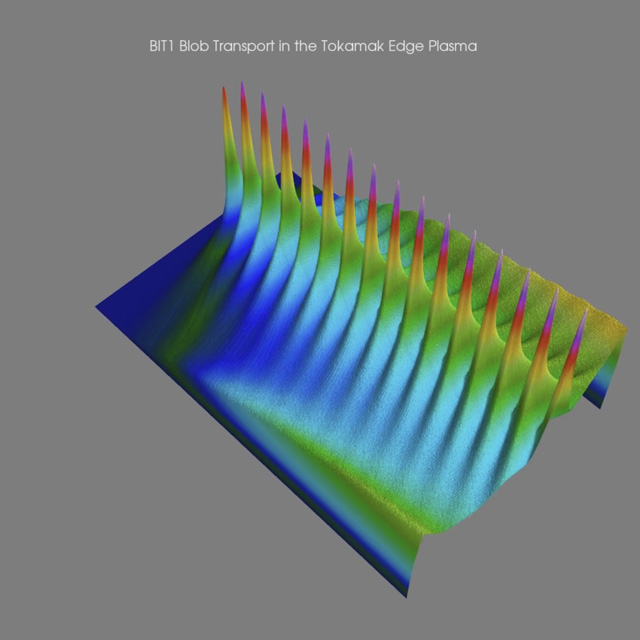

Accelerating Plasma Particle Simulations with OpenACC

BIT1 simulates the behavior of plasma particles near the edge of fusion devices, particularly along magnetic field lines near a divertor. At the beginning of the CINECA Open Hackathon, BIT1 was a CPU-only code consisting of 170 source files and about 40,000 lines of code, and its simulations took a very long time to run. With access to LEONARDO and expert mentors by their side, team BSCBIT focused primarily on porting the code to GPU.

“We successfully ported three routines of the BIT1 project to GPUs using OpenACC, reducing runtime for two of them. The hackathon provided hands-on experience, expert mentoring, and valuable knowledge that we wouldn’t have acquired on our own in such a short time”, shared Dr. Xavier Saez, Senior Research Engineer at Barcelona Supercomputing Center. With the insight gained at the hackathon, the team continues to reap the benefits of their effort in the long term, sharing the findings with other domain experts.

“At the hackathon we built a solid foundation to continue GPU porting and scalability efforts on our own. Post-hackathon, we will validate the whole simulation for scientific accuracy to share our achievements with the BIT1 developers and the broader fusion community.”

Read the team's account of their CINECA Open Hackathon experience on the Fusion Group’s website.

Paving the Way for Affordable Italian NLP and Open Research

Some teams, like team Raganato, led by Prof. Alessandro Raganato of the University of Milano-Bicocca, had already started their GPU journey before the hackathon. Even so, the team saw a path to greater efficiency and faster training, and set the goal of moving from a single-node to a multi-node setup. With guidance on best practices for multi-GPU training, the team reached domain-standard performance at an impressive speed.

“At the Hackathon, we scaled our small Italian↔English translation model (DIETA, a 0.5B decoder-only model) efficiently from 1 to 32 GPUs, reaching ~700 tokens per second. We show competitive performance on standard benchmarks and are laying the groundwork for >1.5B-parameter training”, said Alessandro Raganato.

As an outcome, the team published a paper at the Italian Conference on Computational Linguistics, CLiC-it 2025, titled ‘DIETA: A Decoder-only transformer-based model for Italian–English machine Translation’. Read the full paper here.

Simulating Turbulent Drops at Extremely Large Scale on Multiple GPUs

Team Turbulent drops was also optimizing its code for multiple GPUs. Their code, MHIT36, is a phase-field code for GPU simulations of multiphase homogeneous isotropic turbulence. It is written in Fortran 90 with OpenACC, and its Poisson solver had already been adapted to multiple GPUs before the code was brought to the CINECA Open Hackathon 2025. Even so, the code gained a 3x speedup from improved memory management after the first round of profiling.

“By directly modifying and testing our code with the guidance of our mentors, we significantly deepened our understanding of GPU computing. Even with a working multi-GPU code, we managed to squeeze out a lot of performance and achieve an excellent speedup of more than 26x”, shared Dr. Roccon while presenting the final results of the CINECA Open Hackathon.

These results will have a tangible effect on the future development of MHIT36, as the new scale of experimentation opens up:

“These improvements allow us to use our compute resources much more efficiently, greatly reducing the wall-clock time of our simulation. We can now conduct extremely large-scale simulations in 4096³ resolution, simulating in 10 minutes what would previously take 4 hours.”
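As a rough sanity check on the figures quoted above, the wall-clock times can be converted into a speedup factor. The sketch below is illustrative only: the `speedup` helper is a hypothetical name, and the inputs are the rounded "4 hours" and "10 minutes" from the quote, so the result is approximate and not expected to match the reported "more than 26x" exactly.

```python
def speedup(before_minutes: float, after_minutes: float) -> float:
    """Return how many times faster the new run is than the old one."""
    if before_minutes <= 0 or after_minutes <= 0:
        raise ValueError("run times must be positive")
    return before_minutes / after_minutes


# Rounded figures taken from the quote: 4 hours before, 10 minutes after.
before = 4 * 60  # minutes
after = 10       # minutes

factor = speedup(before, after)
print(f"Approximate speedup: {factor:.0f}x")  # prints "Approximate speedup: 24x"
```

With these rounded inputs the ratio comes out at roughly 24x, the same order of magnitude as the speedup the team reported in its final presentation.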

Enabling Future Development: from Machine Learning to Bioinformatics to Astrophysics

Other participating teams brought forward significant results, from GPU porting to deep code optimization, and incorporated learnings from mentors across institutions and industry.

Team CAMML explored machine learning methods to help accelerate the design of materials and molecules. Their primary goal, speeding up algorithms for equivariant message passing, was accomplished by accelerating the most demanding parts of the codebase with cuEquivariance. After GPU porting, the model saw a speedup of over 6x, which allows the team to scale to larger models at speeds approaching those of non-equivariant models.

Team In the grid we trust worked on porting RCFDTD, an in-house Finite-Difference Time-Domain (FDTD) code used for electromagnetic analysis, from CPUs to GPUs. Starting with code that had already been partially tested on GPUs, the team used the hackathon to target the most time-consuming part and gain insights into potential code improvements, achieving a 3.5x speedup. The hackathon served as a platform for future development, giving the team time to test and optimize before full production.

Team DrugBuddies focused on drug repurposing and brought DREAMS (Drug Repurposing through Enhanced AI Multimodal Systems) to the CINECA Open Hackathon. To bridge the gap between molecular representations and disease phenotypes, the team integrated two pre-trained transformers, which presented opportunities for training parallelization. By the end, the team had successfully implemented multi-GPU training. Using the hackathon’s learnings and technical toolkit, they significantly improved the model’s throughput and learned to collaborate effectively on deep learning workflows under real-world constraints.

Team BRAVE worked on MBHB-ECHO, which studies the response of the so-called Broad Line Region to the ionizing continuum emitted by the central source, with applications in astronomy and astrophysics. The team rewrote the whole implementation of their application after studying in detail the most time-consuming points of the code and achieved, according to Lorenzo Bertassi, “much faster progress” than they would have working alone. “This GPU-optimized version will be the foundation for our future projects, like parameter estimation on real observations. By optimising the CPU version and successfully porting it to GPU, we obtained a x300 speedup.”

A Stepping Stone towards the Future of European Research

As the hackathon came to an end, the participating teams not only celebrated the immediate performance gains but also took home best practices and next steps for improving their applications and codes. Through extensive hands-on experience and expert mentorship, teams across various domains gained crucial knowledge, building a strong foundation for future large-scale research on EuroHPC infrastructure.